A recent report from Brandon Hall Group on alignment and measurement of learning paints a grim picture. Researchers found only 6% of organizations link learning to business results, and most don’t even try to measure it.[1]

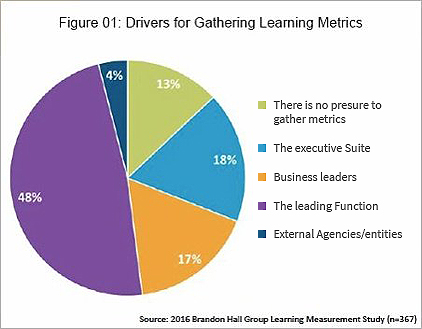

At face value, the survey results show that business leaders care little about measuring learning impact. Most of the desire to measure learning comes from the learning function itself (Figure 1).

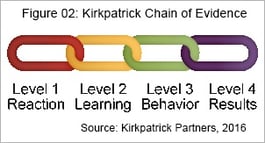

Measures at Kirkpatrick Levels 1 and 2 are commonplace (Figure 2), but few organizations go beyond reaction and learning captured by surveys and smile sheets.

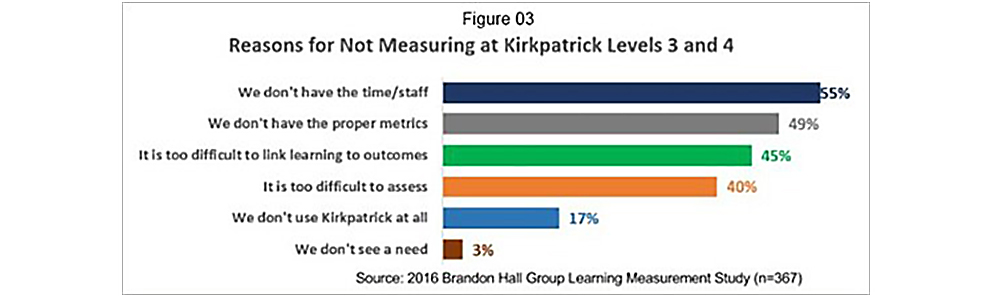

Companies most frequently cited lack of time and staff as the reason they don’t measure learning beyond the basics, but the next three reasons indicate a lack of ability (Figure 3). They don’t know how to get the measurement done.

The BHG report concludes that businesses should focus on results, not ROI. We agree with the conclusion that limiting metrics to ROI doesn’t capture the value to the firm, and, in our experience, it can lead to a self-defeating cost-cutting mindset.

The reasons companies don’t measure business results bring us to one conclusion: it’s too hard. If measuring experiential learning were easy, everyone would do it. What guides the decision about what to measure is how difficult data capture is for business users.

The learning industry can capture a lot of data about formal training, but the most valuable learning is informal and experiential. Catching course completions when learning takes place in a classroom or online is easy. However, learning gained through a task, stretch assignment, or a conversation presents challenges unless we apply critical thinking to how we create and collect data.

The problem with learning and talent management software is that almost every activity requires human interaction. Tracking experiential activities adds to the workload for both employee and manager. Documenting a coaching session in development software takes time, so the human tendency when a coaching session is over is to move on to the next task. As a result, it’s as though the event never happened.

The real reason companies don’t collect data on experiential learning is that software has not caught up with the need.

In today’s interconnected world, data integrations are ubiquitous. Data connections have been standardized and protected with bank-level security. Nearly every commercially available enterprise software platform has connection capability built in. Slack boasted 17 connections a year ago, and we are sure the number is growing.

With collaboration tools like Huddle, Slack, and SharePoint, we now create vast amounts of data about experiences. Our calendar and email tools create data points every time we schedule a meeting, assign a task, create a work team, or collaborate on work product. These activities generate valuable information about learning opportunities.

Appointments, tasks, team sites, and assignment rotations can be configured or customized to capture additional data in seconds. We can build integrations to collect data and bring it into talent management platforms as part of an employee development record. We can analyze the data to provide insight into learning and its value -- with the technology that exists today.

Who will lead the measurement revolution?

For more information about integrations see our article about Integration Strategy for Cloud Applications.

References:

1. “Learning Measurement 2016: Little Linkage to Performance.” Brandon Hall Group. 2016.

If you are still struggling with measuring learning impact, you are not alone. Brandon Hall Group’s research summary Learning Measurement 2016: Little Linkage to Performance by David Wentworth paints a gloomy picture of the current state of measurement in learning. The report concludes that only 6% of respondents in their study are linking learning to performance.

The conclusions in the report give us more questions than answers. While the responses give us a good idea of the general state of measurement, what the answers mean in individual cases would require in-depth study in each organization.

Here’s our take on the conclusions of the report:

Most of the Need to Measure Learning Comes from within the Learning Function.

We have been led to believe by most pundits that top executives are demanding ROI measures, but the study shows that only 35% of the pressure for measurement is coming from the executive suite or business leaders.

The report doesn’t tell us what other dynamics are at work. Executives may have despaired of getting performance linkage from L&D, and in many companies, individual business functions are managing their own learning. They contract learning initiatives with one purpose in mind – improving performance. Their gains show up in their KPIs but have nothing to do with what is going on in L&D.

Still, if participation in training programs for learning measurement is any sign, there is a strong desire to measure learning outcomes. We are confident that those L&D leaders who apply themselves diligently to learning measurement will prevail.

Companies are struggling to measure informal and experiential learning.

Measuring informal and experiential learning can be difficult, but the means and technology are available to make it easy. The difficulty lies in getting learners’ and managers’ assessments of results without overburdening them. Quick, easy mobile surveys are now simple to deploy and track.

Line-of-business leaders are accustomed to forecasting and estimation, and if they are involved in learning programs and invested in the results, they will help. The solution is to engage them in initiatives from the outset.

One tendency that gets in the way is the irrational need for perfect measurement. Many people labor under the assumption that if you measure something, it must be exact to be meaningful. That is far from true. In scientific inquiries, estimates are all we have, and they work well. The measurements used in the moon landings of the 1960s and 1970s were estimates. Eratosthenes (ca. 276-194 B.C.) estimated the size of the earth within 3% by measuring shadows.

Few companies measure beyond Kirkpatrick levels 1 and 2.

No mystery here. This is where the easily measurable activity takes place.

Organizations use mostly basic metrics and few outcomes to measure learning.

Basic metrics are easier to collect, and most teams are busy enough without managing additional data collection efforts. As many organizations learned during the quality movement of the 1980s, you can become overwhelmed when you try to measure too much. Measuring what matters is enough.

ROI is not a top driver of measurement.

We agree with Wentworth that ROI is not as important as learning impact. What CEOs care about is performance. What doesn’t belong in the company’s income statement may still be an important measure for making business decisions. An estimate of business impact is sufficient, and sometimes intangibles can be substantial factors in decisions.

You need not be alarmed if you are still struggling with learning measurement. You are not alone.

By aligning learning to business, you will find over time that the help and resources you need will be available. We recommend that you embark on an alignment journey by building alliances with line-of-business leaders. Work with them to use learning to solve their performance challenges. Use their performance measures to show the value of learning.

Get a head start on alignment by downloading our free e-book How to Align Learning to Business.

Advances in learning technology, brain science, and teaching methods over the past few years have created new possibilities for developing knowledge and skills in the workplace, and not a moment too soon. A majority of respondents in a recent McKinsey study expect significant changes in corporate learning over the next three years. The demand for online learning at the speed of business is growing in the quest for organizational agility.[1]

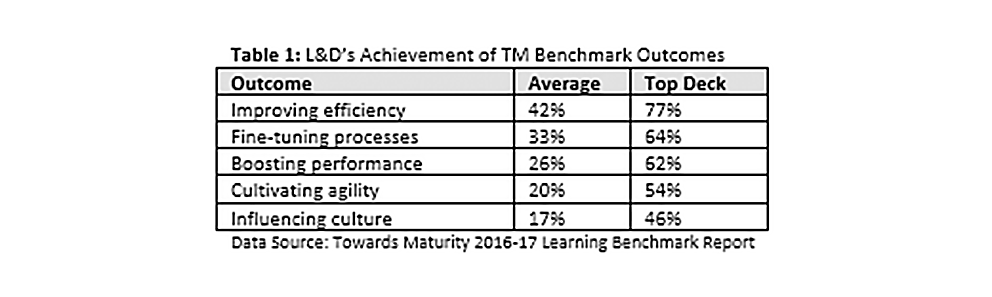

In many of these enterprises, the gap may be due more to lack of measurement than to poor execution. What gets measured gets noticed, and few L&D organizations measure the impact of learning on the business. It's hard for CLOs to measure what they don’t manage, and most of the data they need live outside of their control. Because senior leaders and line-of-business managers do not see information about the impact of learning, they may have only a vague notion that the connection exists. For most organizations, the road ahead is challenging. According to McKinsey, less than half of L&D organizations align themselves with corporate priorities to help their organizations meet strategic objectives. Towards Maturity’s 2016 benchmark survey of over 5,000 organizations reported shortcomings in L&D’s achievement of five essential outcomes, and contrasted the average assessment with that of Top Deck (top 10%) organizations.

If L&D wants to show the impact on the business, they will have to make the first move toward a partnership in planning, executing, and measuring learning outcomes, and that means devising measurement models where metrics don’t yet exist.

Much of today’s learning happens outside the LMS. Most organizations do not yet have sophisticated methods for capturing offline and informal learning. Control groups are almost always impractical, and historical data for trend line analysis rarely exists. Fortunately, expert estimation is easy and inexpensive to implement.

Using the expert estimation approach to measurement does not mean you gather people together and have them guess at results. Estimation is a learned skill. In learning programs, the experts can be the participants. They may be the most knowledgeable and will have a vested interest in the impact on their performance. Their estimate will likely be credible in the eyes of management.[2]

To be successful, you will need to lay the groundwork for the measurement effort.

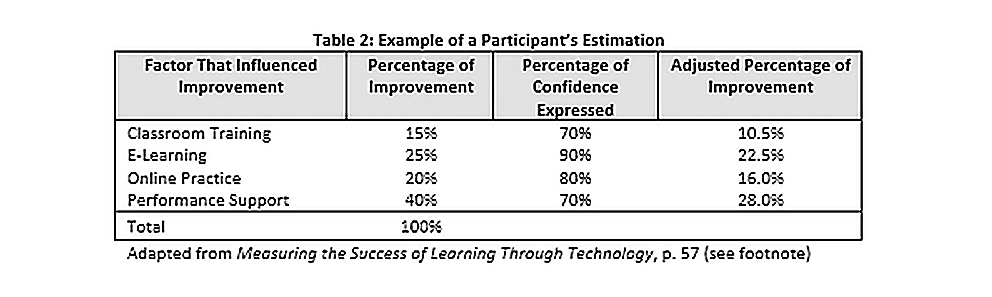

An estimate has two parts: a percentage of improvement and the degree of confidence in that estimate. Let’s take an example of a learning initiative to improve customer service with blended learning and performance support. If a participant estimates that 30% of the improvement in performance is due to a single factor and expresses 90% confidence in the estimate, the adjusted percentage for that factor is 30% × 90% = 27%. If the monetary value of the gain is $100,000, the value of that component of the learning intervention is $27,000.
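For readers who want to put the arithmetic into a spreadsheet or script, here is a minimal Python sketch of the same calculation. The function name `adjusted_value` and its parameter names are our own illustrative choices, not part of any published method; the figures are the ones from the example above.

```python
def adjusted_value(improvement_share, confidence, monetary_gain):
    """Discount an estimated share of improvement by the estimator's confidence.

    improvement_share and confidence are fractions between 0 and 1.
    Returns a tuple: (adjusted_share, attributed_value).
    """
    for name, value in (("improvement_share", improvement_share),
                        ("confidence", confidence)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1")
    adjusted_share = improvement_share * confidence
    return adjusted_share, adjusted_share * monetary_gain


# Figures from the customer service example: 30% share, 90% confidence, $100,000 gain.
share, value = adjusted_value(0.30, 0.90, 100_000)
print(f"Adjusted share: {share:.0%}, attributed value: ${value:,.0f}")
# prints "Adjusted share: 27%, attributed value: $27,000"
```

When several participants provide estimates, one simple option is to run the calculation for each and average the attributed values.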

Developing participants into better estimators does not require a substantial investment in training. Udemy has a two-hour course online on “How to Estimate Anything” for $10, and there are hundreds of other online courses available.

Every business runs on estimates. Even when we make decisions on “gut instinct,” we are calculating risks and probabilities, sometimes unconsciously. Helping people to become better estimators can often be a matter of explaining the principles.

Here’s an example. Hold one arm straight out in front of your body with your thumb pointing straight up. Now, how far is your thumb from your nose? How confident are you that it is less than six feet and more than six inches? You would probably say you are 100% confident. Now, how confident are you that it is more than 28 inches and less than 32 inches?

By starting with a large range of values and gradually reducing it, you can reach a point where you have substantial confidence in a narrow range of values. When you express an estimate in terms of confidence in a range of values, people immediately grasp the value of the estimate and are willing to act on the estimate.

Other measurement methods may be more accurate, but expert estimation, used wisely, can get the job done faster and at much less cost. It may be the key that opens the door to building a learning organization.

References:

2. Elkeles, Tamar, Patricia Pulliam Phillips, and Jack J. Phillips. Measuring the Success of Learning Through Technology: A Step-by-Step Guide for Measuring Impact and ROI on E-Learning, Blended Learning, and Mobile Learning. Alexandria, VA: ASTD Press, 2014.

In a previous article, we discussed the possibilities of using the same tools we use for an end of course assessment for measuring the impact of learning. Today we want to expand on that idea to show you some of the ways you can evaluate learning using the tools in your LMS.

While the pundits and consultants rave about advanced analytics, artificial intelligence, and machine learning, almost all the information consumed in business today is operational reporting.

That doesn’t mean that LMS reporting has stood still during the digital transformation. We now have many new ways to access information. Instead of quarterly reports, we now have real time interactive visualizations, and embedded analytical engines in our learning management systems have made it possible for us to deliver real business intelligence immediately to the people who make decisions.

When it comes to evaluating learning, our operational reporting and our assessment tools form a powerful combination that can help us understand our learning activities at a profound level. The process begins with designing assessments, or surveys that produce valid, useful information.

You might be thinking at this point that we proved decades ago that end-of-course evaluations, or “smile sheets,” have little or no value in predicting improvements in performance. That is true. A meta-analysis of 34 scientific studies in 1997 found that smile sheets were uncorrelated with learning results, and a similar, larger study in 2008 produced the same result.[1]

We first began trying to eradicate Likert scales and other fuzzy measures in the early 2000s. We were working with organizations seeking to improve not only their learning standards, but also their performance management systems, development plans, and succession management practices. A lot of organizations were experimenting at that time, and our primary focus was using granular behavioral statements that eliminated as much bias and ambiguity as possible. It could be cumbersome.

We get better results when we can reduce questions to behavioral statements and ask the respondent to give us a yes/no response to each. The binary response removes the guesswork of scales or levels and focuses the respondent on the behavior rather than an ambiguous value. Then, we can present the percentage of responses to get a valid assessment.
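As a rough illustration of presenting yes/no responses as percentages, the Python sketch below tallies answers per behavioral statement. The statements, the sample responses, and the function name `percent_yes` are hypothetical and exist only to show the mechanics.

```python
from collections import Counter


def percent_yes(responses):
    """Return the percentage of 'yes' answers for each behavioral statement.

    responses: list of dicts, one per respondent, mapping statement -> True/False.
    """
    yes = Counter()
    total = Counter()
    for response in responses:
        for statement, answer in response.items():
            total[statement] += 1
            if answer:
                yes[statement] += 1
    return {statement: 100.0 * yes[statement] / total[statement]
            for statement in total}


# Hypothetical statements and answers from four respondents.
GREETING = "I used the new greeting script with customers"
LOGGING = "I logged each call in the system"
sample = [
    {GREETING: True, LOGGING: True},
    {GREETING: True, LOGGING: False},
    {GREETING: True, LOGGING: True},
    {GREETING: False, LOGGING: False},
]

results = percent_yes(sample)
for statement, pct in results.items():
    print(f"{pct:5.1f}%  {statement}")
```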

“Whoa!” you say. “Some things are more important than others. We need a way to differentiate between what is merely okay and what is the most desired result.”

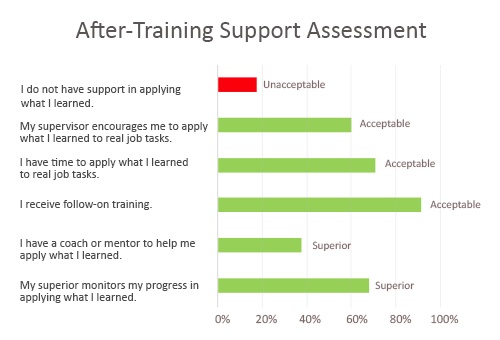

Thalheimer attacks this problem by assigning an acceptability level to each statement, beginning with what is acceptable and what is not. We can then define other levels if we so choose. So, if we are evaluating the quality of after-training support, we could have something like this example:

One of the skills you learned in your recent training was ___________. Since ongoing training and support are essential to learning new skills, we want to know what support you are receiving in applying the training.

Check all that apply.

We can chart it like this:[3]

We can apply the technique to any stage of the learning process, from design to after-training support, but we can also use this to gauge impact on the business. Let’s take an example of upselling training for customer support staff. We can send a questionnaire to participants, and, at the same time, gather the same information from managers to further validate our findings.

You (your team) recently completed upselling training for our new product line. Please complete the following short questionnaire to help us gauge how effective the training was in helping you (them) to sell the product.

Check all that apply:

Here’s a little exercise for you. How would you assign acceptability levels to these items? Apply the Thalheimer labels if they fit or create your own.

We can apply the same methods to any situation where we have observable performance.
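To make the idea of acceptability levels concrete, here is a small Python sketch that summarizes a "check all that apply" question. The answer options and the level labels ("unacceptable", "acceptable", "superior") are invented for illustration and are not taken from Thalheimer's book; substitute your own options and labels.

```python
# Hypothetical options and acceptability labels, invented for this sketch.
OPTION_LEVELS = {
    "My manager discussed how to apply the skill": "acceptable",
    "I have had a chance to practice on the job": "acceptable",
    "I receive regular coaching on the skill": "superior",
    "I have received no support": "unacceptable",
}


def summarize(selections, option_levels):
    """Report what share of respondents checked each option, with its level.

    selections: list of sets, one per respondent, holding the options checked.
    Returns a list of (level, option, percent_of_respondents) tuples.
    """
    respondents = len(selections)
    if respondents == 0:
        return []
    rows = []
    for option, level in option_levels.items():
        checked = sum(1 for chosen in selections if option in chosen)
        rows.append((level, option, 100.0 * checked / respondents))
    return rows


# Four hypothetical respondents.
options = list(OPTION_LEVELS)
sample = [
    {options[0], options[1]},
    {options[0]},
    {options[3]},
    {options[0], options[2]},
]

summary = summarize(sample, OPTION_LEVELS)
for level, option, pct in summary:
    print(f"{level:<13}{pct:5.1f}%  {option}")
```

Grouping the output by level makes it easy to see at a glance how many respondents fall into the unacceptable range.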

If you don’t already have Thalheimer's book, we recommend you get it and give the principles a try. You have nothing to lose but your useless smile sheets.

References:

1. Thalheimer, William, PhD. "Performance-Focused Smile Sheets: A Radical Rethinking of a Dangerous Art Form." Kindle location 313. Somerville, MA. Work-Learning Press, 2016.

2. Thalheimer.

3. Thalheimer.

Chasma Place is an independent source for solutions that will help you keep pace with changes in the way your people work without ripping and replacing your existing systems.

For years, industry leaders have been encouraging L&D to align itself to business and to measure and report on the impact of learning. We must admit, we spend lots of time on that soap box ourselves.

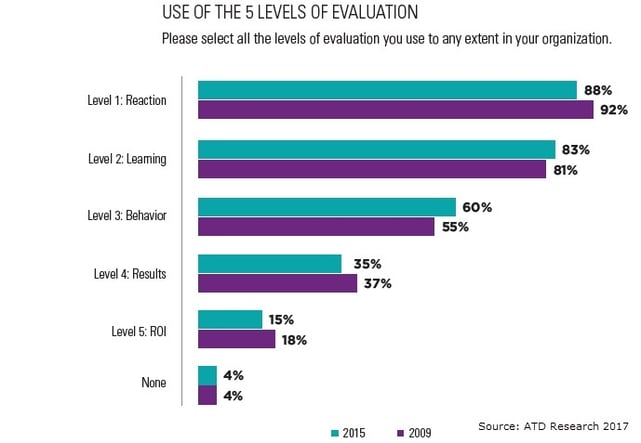

But if we take the latest ATD learning measurement survey as an indication, the profession has made no progress in measuring results or ROI. The study, conducted in 2015, actually showed decreases in those measurements since 2009.[1] It also shows us that most organizations still measure at Kirkpatrick levels 1 and 2. Only 36% of respondents said their evaluations were "helping to a large extent with meeting organizational business goals."

Why so little progress? The world of HR data analytics, including L&D, has become one of haves and have-nots. Big companies developed in-house analytics teams or relied on consulting companies, while many others waited on the sidelines for analytics to become viable for them.

Analytics vendors jumped into the market, but most organizations still sat by waiting for industry leaders to prove the value of a significant investment in HR analytics. Vendors responded by working with HR analytics software vendors to embed their analytics engines into cloud HR platforms. Thus was born the idea of the “democratization of data.”

The advent of embedded analytics created a new wave of hype, and in the last two years or so we have seen some progress, but the number of organizations that come to us looking to span the gap between analytical tools and usability continues to grow. Embedded tools are a far cry from a robust library of reports and visualizations, and many companies find they just don’t have the resources or the expertise to build them.

A host of conflicting agendas contributes to what Tracey Smith, author of many books on analytics, calls a fractured HR analytics world. She explains that with data scientists pulling in one direction and vendors in another, with some people advocating for regulation and publication of HR data and others clamoring for certifications in HR analytics, practitioners have lost focus. Smith encourages us to overcome this with focus and prioritization.

We wholeheartedly agree. We find, in our practice, that those issues melt away when we focus on the needs of internal customers. When you are trying to help the operational leaders in your business get better results, it doesn’t matter that data scientists think you need a Ph.D. to do analytics and vendors believe analytics ought to be for everyone. Nor does it matter that regulators and social scientists disagree on what information we should be collecting and how public it should be.

If you have been a learning professional for longer than a few minutes, you know about “smile sheets,” the feedback forms we like to use at the end of an instructor-led training course. Their purpose is to capture learner reaction at level 1 of the Kirkpatrick measurement model.

E-learning and SCORM standards have enabled us to take that simple tool and make learning assessments useful, and xAPI shows promise in enabling measurement of any type of learning. Every modern LMS has the tools to push out assessment questionnaires at any point in the learning cycle. We can create assessments to evaluate what takes place post-learning and whether formal or informal on-the-job learning is reinforcing classroom or online training. And we can request feedback from anyone at any time.

With that in mind, here are a few recommendations for assessing the impact of learning on the business.

If you follow these steps, you will have all the data you need to show the impact of learning on performance and will be able to use the visualization tools in your LMS embedded analytics to show the result.

Creating those visualizations starts with asking the right questions. Here is a sample to get you started:

We hope we have shown here that you can measure the impact of learning without investing in more expensive technology. You can use the tools you have to get an excellent idea of how your learning and support programs are impacting business performance.

References:

1. "ATD Research Presents - Evaluating Learning: Getting to Measurements That Matter." ATD. April 2016.

2. Thalheimer, Will. Performance-focused smile sheets: a radical rethinking of a dangerous art form. United States: Work-Learning Press, 2016.

In our previous article on learning measurement, we discussed the need for you to have the right technology to support your needs. Your LMS must be able to capture all the data points you need and present them in a way that meets the needs of your users. If your budget does not accommodate all your needs, a careful assessment will help you prioritize what you can do. Begin with evaluating the needs of your people.

Each role in your organization has unique needs, and to satisfy them you need to present information in a way meaningful to them. We can make certain assumptions, but we recommend you ask them what information they require and how they use it.

However, your information-gathering should go beyond what your users tell you. They may have needs they haven’t thought about or can’t articulate. Keep digging until you are satisfied you have exhausted the possibilities.

Evaluating your ability includes both the technology and your capacity to use it. Before you consider spending on technology, determine if your skill mix is holding you back. If it is, you may be better off upskilling with training or hiring. You might also want to consider hiring a consulting partner while you grow your capability.

If your challenge is that your LMS is not capable of capturing and reporting the information you need, consider whether an upgrade might serve you better than replacement. If you are using outdated SCORM technology, it may be time to move up to Experience API or cmi5 if your LMS is compatible. SCORM has been with us since 1999 and has served well, but it cannot keep up with today’s delivery modes. It does not have the means to capture events outside the LMS like one-on-one coaching. On the other hand, it is in widespread use and provided with most LMS platforms and authoring tools, so it is easy to find help when you need it.

Experience API, or xAPI, is much more flexible, but it lacks packaging and structuring tools that SCORM has. You can use it to record any event, including using your calendar and mail systems to record coaching sessions.
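To give a feel for what recording a coaching session might look like, the Python sketch below builds a statement in xAPI's actor / verb / object pattern from the kind of details a calendar entry holds. Treat it as an illustration under assumptions: the function name, the people, the email address, and the `example.com` activity ID are hypothetical, and you should confirm the verb and activity type identifiers against the vocabulary your learning record store expects. The sketch only builds and prints the statement; it does not send it anywhere.

```python
import json
from datetime import datetime, timezone


def coaching_statement(learner_name, learner_email, session_id, session_title, when):
    """Build an xAPI-style statement describing attendance at a coaching session."""
    return {
        "actor": {
            "objectType": "Agent",
            "name": learner_name,
            "mbox": f"mailto:{learner_email}",
        },
        "verb": {
            # Verb identifier assumed from the ADL vocabulary; verify before use.
            "id": "http://adlnet.gov/expapi/verbs/attended",
            "display": {"en-US": "attended"},
        },
        "object": {
            "objectType": "Activity",
            # Hypothetical activity ID; use an IRI your organization controls.
            "id": f"https://example.com/activities/coaching/{session_id}",
            "definition": {
                "name": {"en-US": session_title},
                "type": "http://adlnet.gov/expapi/activities/meeting",
            },
        },
        "timestamp": when.isoformat(),
    }


# Hypothetical calendar entry for a one-on-one coaching session.
statement = coaching_statement(
    learner_name="Pat Example",
    learner_email="pat@example.com",
    session_id="2017-03-14-upselling",
    session_title="One-on-one coaching: upselling",
    when=datetime(2017, 3, 14, 15, 0, tzinfo=timezone.utc),
)
print(json.dumps(statement, indent=2))
```

In practice, an integration would pull these fields from the calendar or mail system automatically, so neither the employee nor the manager has to document the session by hand.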

Cmi5 is a new standard developed in the aviation industry and now managed by Advanced Distributed Learning. It is a bridge between xAPI and SCORM because it combines the flexibility of xAPI with the packaging and structuring tools that xAPI lacks.

Your needs will be unique, but we can suggest common features you might want to use as a baseline:

Customizable Reports. Your reporting solution should be customizable and flexible enough to provide any data point you need, now and in the future. There are many low-cost vendors whose reporting capabilities are limited to canned reports or limited customization.

A User Interface for Non-Technical Users. Your staff should be able to produce custom reports without programming. Features like calculated fields should be well-supported on-screen and easy for an ordinary business user. You may need to handle scheduling or FTP file transfers, but these should be in an easily understandable user interface.

Customizable Interactive Charts and Graphs. Creating any chart or graph, including pivot tables, should be within the capability of a tech-savvy business user. The displays should be interactive and easy to manage without technical knowledge.

Customizable Dashboards. If your reporting tool meets all of the above requirements, you will want to create a set of executive graphical reports you can display on a dashboard. We can tell you from our experience that each of your executives will want to configure a unique view of the business.

Integration Tools. Managers and executives will not want to see learning reports in isolation. They will want to see the data in relation to the aspects of the business they manage. They will need to juxtapose learning with other factors like performance and cost trends. Managers will want to see skill gaps and performance metrics on the same display. Your reporting tools should be able to send data to other systems with no programming.

If your LMS meets your needs except for reporting, consider whether an analytics platform such as Cognos, Crystal Reports, or Tableau will meet your needs at a lower cost. Work with a trusted analytics partner to assess your needs.

If you decide you need a new LMS, investigate the reporting capabilities of each candidate thoroughly. We explored several of the popular online software selection services, but none of them listed reporting as a criterion for LMS selection. We think it should be the first consideration – a data system is only as good as your ability to retrieve information from it.

For help with building your business case, read our free e-book on Building the Business Case for a New Learning Management System. It will help you form the relationships you need to get your project approved.

One more bit of advice: don’t be lured into thinking you need to make a substantial investment in predictive analytics. It requires a significant commitment and is only useful if your business needs complex forecasting tools.

We hope this overview has helped you to form the right questions in your mind about your current capability and what you need to do about it. We encourage you to explore our blog for more articles on learning and analytics.

Pixentia is a full-service technology company dedicated to helping clients solve business problems, improve the capability of their people, and achieve better results.